Continuous Intelligence: Rethinking the Architecture of AI Systems

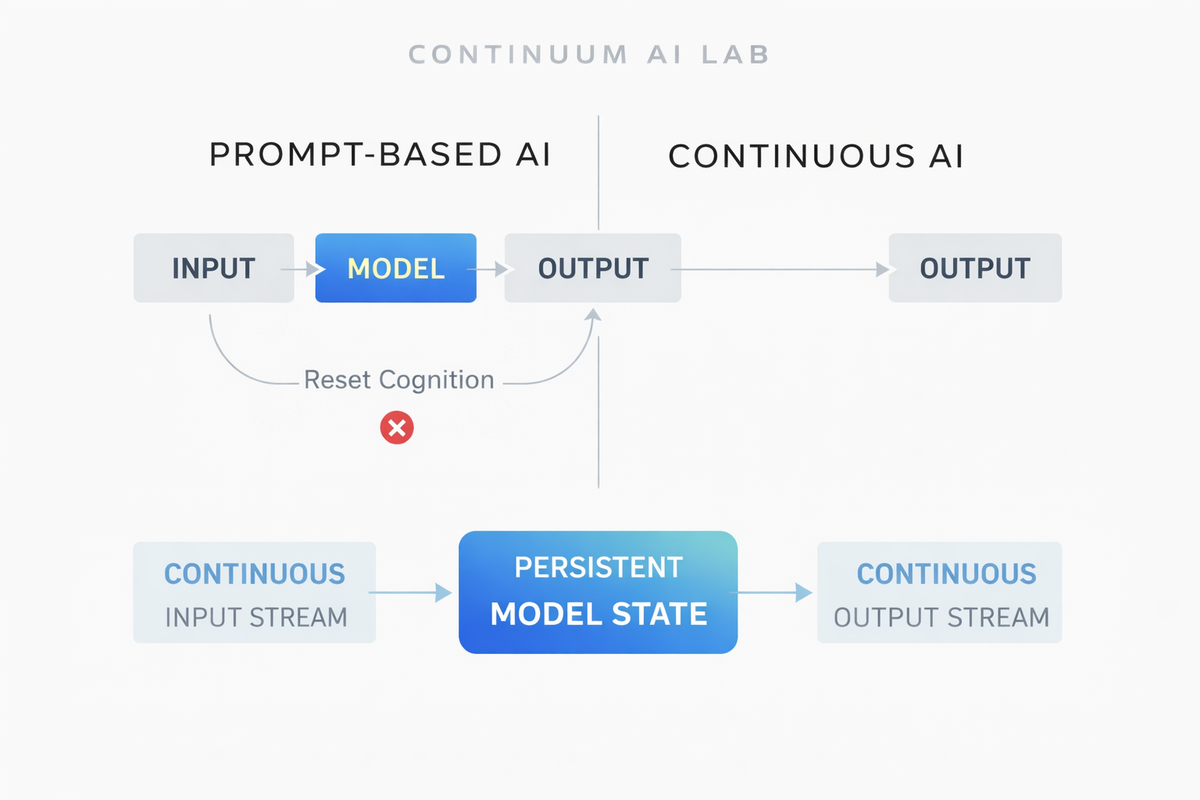

Most modern AI systems are built around a simple paradigm: a model receives an input, generates an output, and then resets. This request–response architecture has proven highly effective for a wide range of applications—from text generation to code synthesis and multimodal reasoning.

However, this design reflects an engineering constraint rather than a fundamental property of intelligence.

Human cognition does not operate as isolated request–response events. Instead, it unfolds continuously over time. Humans observe their environment, integrate new information with prior knowledge, update internal representations, and act in an ongoing process of perception and reasoning.

As AI systems become increasingly capable, the limitations of the traditional prompt-based paradigm are becoming more apparent. Many emerging applications—from autonomous agents to real-time decision systems—require models that operate continuously rather than episodically.

At Continuum AI Lab, we are exploring an alternative paradigm: continuous intelligence.

The Limits of Request–Response AI

The dominant architecture for modern AI systems can be summarized as:

Input → Model Inference → Output → Reset

This design has several advantages. It simplifies scaling, parallelization, and infrastructure deployment. It also aligns well with API-based software architectures.

However, it introduces fundamental limitations.

1. Lack of Persistent Context

Most AI systems do not maintain long-term internal state between requests. External memory mechanisms, such as retrieved documents or conversation history replayed into the prompt, can approximate persistence, but the core model itself typically operates without a continuous internal representation of its environment.

2. Episodic Cognition

Because each interaction is treated as a separate event, models are unable to develop evolving internal beliefs about the world. This makes it difficult to support tasks that unfold over extended time horizons.

3. Reactive Rather Than Proactive Systems

Prompt-based systems act only when explicitly queried. This restricts their ability to monitor environments, anticipate events, or operate autonomously.

These limitations become increasingly important as AI systems move beyond static tasks and toward long-running autonomous agents.

A Different Paradigm: Continuous Intelligence

We propose an alternative architectural principle: AI systems that operate continuously rather than episodically.

In this paradigm, models are designed to process streams of input and output over time rather than isolated prompts.

Instead of:

Prompt → Response

the system behaves more like:

Continuous Input Stream → Persistent Model State → Continuous Output Stream

In other words, the model is not invoked only when queried—it is already running.

This allows the system to maintain evolving representations of the environment, update its internal state as new information arrives, and generate outputs when appropriate.
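To make the contrast concrete, here is a minimal sketch in Python. It is an illustration under simple assumptions, not a description of any particular system: `respond`, `ContinuousProcess`, and the "ends with an exclamation mark" trigger are all hypothetical names and rules chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Iterable, Iterator, List


def respond(prompt: str) -> str:
    """Request-response: nothing survives between calls."""
    return f"echo: {prompt}"


@dataclass
class ContinuousProcess:
    """Hypothetical always-running process with persistent state."""

    seen: List[str] = field(default_factory=list)

    def run(self, stream: Iterable[str]) -> Iterator[str]:
        for event in stream:
            # State persists across inputs instead of being reset.
            self.seen.append(event)
            # Output is produced only when appropriate, not once per input.
            if event.endswith("!"):
                yield f"alert after {len(self.seen)} events: {event}"


proc = ContinuousProcess()
outputs = list(proc.run(["ok", "ok", "disk full!", "ok"]))
print(outputs)
```

The stateless function maps each input to exactly one output. The continuous process consumes four events, keeps all of them in its state, and emits a single output when its (toy) condition is met.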

Continuous Models

Continuous models operate on temporal streams of information rather than discrete queries.

Examples of streaming inputs include:

- sensor data

- speech

- live video

- software events

- financial data

- internet-scale information flows

The model continuously integrates incoming data and updates its internal representation of the world.
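As a rough sketch of what "continuously integrates" can mean in code, the example below keeps one running estimate per input source and updates it incrementally with an exponential moving average. The moving average is a stand-in chosen for brevity, not a claim about how a continuous model represents the world; `integrate`, `alpha`, and the source names are assumptions made for illustration.

```python
from typing import Dict, Iterable, Tuple


def integrate(
    stream: Iterable[Tuple[str, float]], alpha: float = 0.5
) -> Dict[str, float]:
    """Fold a stream of (source, value) events into a per-source state."""
    state: Dict[str, float] = {}
    for source, value in stream:
        prev = state.get(source)
        # First observation seeds the state; later ones blend old and new.
        state[source] = value if prev is None else (1 - alpha) * prev + alpha * value
    return state


events = [("sensor", 10.0), ("price", 100.0), ("sensor", 20.0), ("sensor", 30.0)]
state = integrate(events)
print(state)
```

Each event costs a constant amount of work, and the state never needs the full history to be replayed.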

This approach aligns more closely with how biological intelligence operates, where perception, reasoning, and action form a continuous feedback loop.

Persistent Agents

Continuous models enable a new class of systems: persistent AI agents.

Unlike traditional chat-based agents, persistent agents remain active over long periods of time and maintain evolving internal state.

Such agents may:

- monitor complex systems

- conduct long-running research processes

- manage software infrastructure

- coordinate workflows across organizations

- adapt strategies based on ongoing observations

Because these agents operate continuously, they can reason across long time horizons rather than single interactions.
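The following sketch shows the shape of such an agent in a deliberately small setting: monitoring a load metric and acting on a trend rather than on a single reading. Everything here is hypothetical, including the `PersistentAgent` class, the window size, the load limit, and the "scale up" action.

```python
from collections import deque
from typing import Deque, List, Optional


class PersistentAgent:
    """Toy agent that stays alive across observations and acts on trends."""

    def __init__(self, window: int = 3, limit: float = 0.8) -> None:
        self.window = window
        self.limit = limit
        self.history: Deque[float] = deque(maxlen=window)
        self.actions: List[str] = []

    def observe(self, load: float) -> Optional[str]:
        self.history.append(load)
        if len(self.history) < self.window:
            return None  # not enough evidence yet
        avg = sum(self.history) / len(self.history)
        if avg > self.limit:
            action = f"scale up (avg load {avg:.2f})"
            self.actions.append(action)
            return action
        return None


agent = PersistentAgent()
results = [agent.observe(x) for x in [0.5, 0.9, 0.7, 0.95, 0.9]]
print(results)
```

A single reading of 0.9 does not trigger anything. The agent acts only once the recent window as a whole exceeds the limit, which is a decision no individual request could make in isolation.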

Architectural Implications

Moving from episodic inference to continuous intelligence introduces several technical challenges.

Key research areas include:

- Streaming Model Architectures: Models must efficiently process incremental inputs without recomputing entire contexts.

- Long-Horizon Memory: Continuous systems require mechanisms for persistent memory, state updates, and forgetting strategies.

- Resource Scheduling: Running AI systems continuously requires new approaches to compute allocation and model lifecycle management.

- Safety and Alignment: Persistent agents introduce new safety considerations, including goal stability, monitoring, and controllability.

Addressing these challenges will require advances in both model design and AI infrastructure.
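To illustrate the long-horizon memory item, here is one possible forgetting strategy, sketched under simple assumptions: every stored item loses weight on each step, items that fall below a floor are dropped, and items that are observed again are reinforced. `DecayingMemory`, `decay`, and `floor` are hypothetical names and parameters, and this is only one of many ways to bound memory.

```python
from typing import Dict


class DecayingMemory:
    """Toy memory whose entries fade unless they are observed again."""

    def __init__(self, decay: float = 0.5, floor: float = 0.2) -> None:
        self.decay = decay
        self.floor = floor
        self.weights: Dict[str, float] = {}

    def update(self, item: str) -> None:
        # Decay every entry and forget those that fall below the floor.
        self.weights = {
            key: weight * self.decay
            for key, weight in self.weights.items()
            if weight * self.decay >= self.floor
        }
        # Reinforce the item that was just observed.
        self.weights[item] = self.weights.get(item, 0.0) + 1.0


mem = DecayingMemory()
for item in ["a", "b", "a", "c", "c", "c"]:
    mem.update(item)
print(mem.weights)
```

The cost of each update depends on the current size of the memory, not on the length of the stream, so the process can run indefinitely without its state growing without bound.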

Why Continuous Intelligence Matters

As AI systems become more capable, many high-value applications will require them to operate over extended time horizons.

Examples include:

- autonomous research agents

- real-time financial analysis systems

- continuous cybersecurity monitoring

- adaptive robotics and control systems

- persistent digital assistants

These applications are difficult to implement within the request–response paradigm, because each depends on state that persists between interactions.

Continuous intelligence provides a framework for building systems that can observe, reason, and act over time.

Looking Forward

The shift from episodic inference to continuous intelligence may represent a major transition in the design of AI systems.

Just as computing evolved from batch processing to always-on internet services, AI may evolve from prompt-driven models to persistent cognitive systems.

At Continuum AI Lab, we are exploring the architectures, models, and infrastructure required to support this transition.

Our goal is to build AI systems capable of continuous perception, reasoning, and action—systems that operate not only when queried, but as ongoing processes embedded in the world.